On the potential of Transformers in Reinforcement Learning

Summary

Transformer architectures are the hottest thing in supervised and unsupervised learning, achieving SOTA results on natural language processing, vision, audio and multimodal tasks. Their key capability is to capture which elements in a long sequence are worthy of attention, resulting in great summarisation and generative skills. Can we transfer any of these skills to reinforcement learning? The answer is yes (with some caveats). I will cover how it’s possible to reframe reinforcement learning as a sequence problem and reflect on the potential and limitations of this approach.

Warning: this blogpost is pretty technical. It presupposes a basic understanding of deep learning and good familiarity with reinforcement learning. Previous knowledge of transformers is not required.

Intro to Transformers

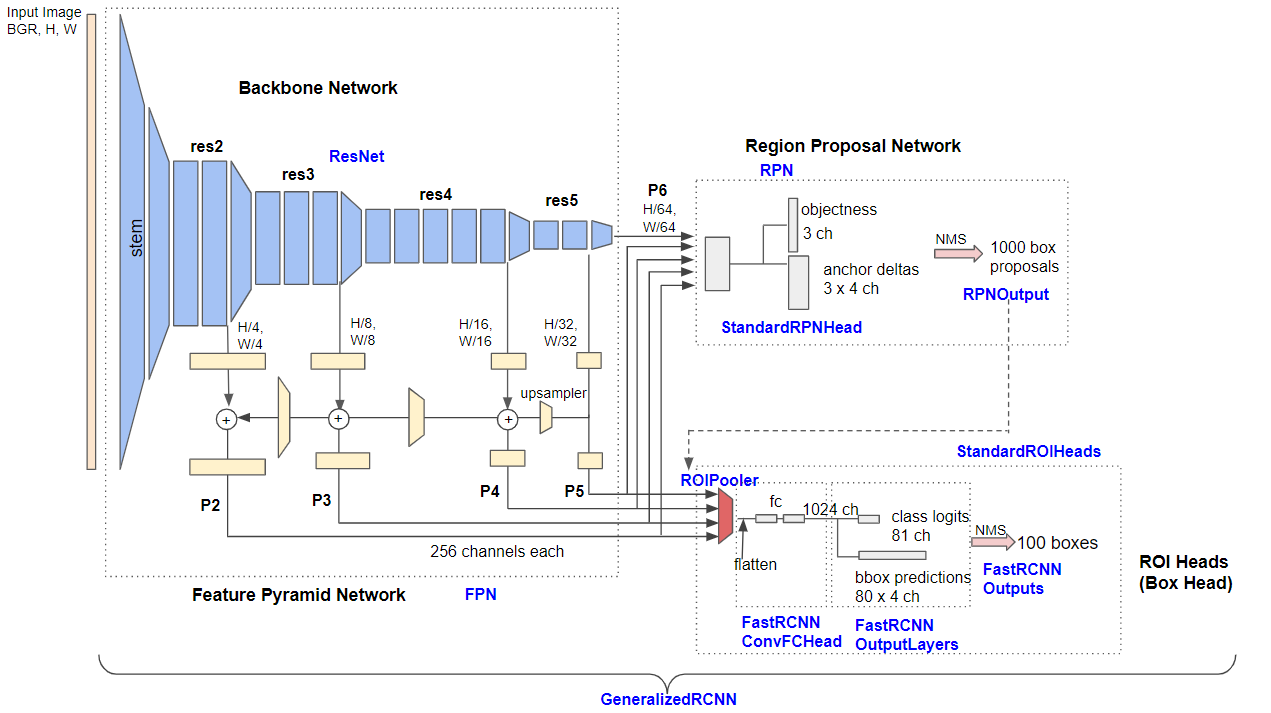

Introduced in 2017, Transformer architectures took the deep learning scene by storm: they achieved SOTA results on a wide range of benchmarks, while being simpler and faster than the previous overengineered networks and scaling well with additional data. For instance, by getting rid of convolutional neural networks (CNNs) we can go from the complex Feature Pyramid Network Base RCNN of Detectron2, which uses the old-fashioned anchor boxes of classic computer vision,

(Feature Pyramid Network Base RCNN Architecture, image taken from this nice blogpost)


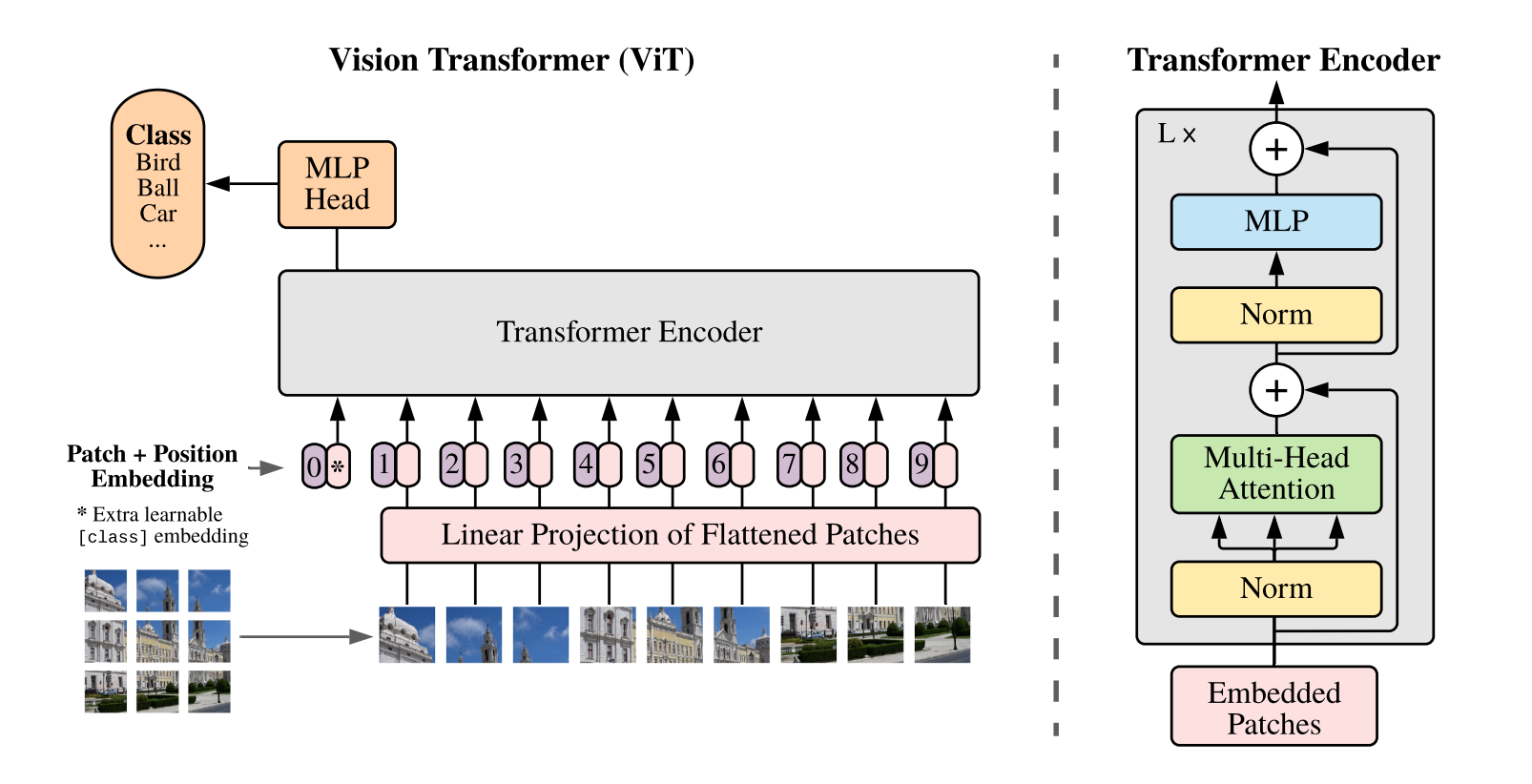

to a transformer model based on embedding vectors extracted from image patches and a few hidden layers.

(ViT Architecture, all credits to the original paper)


Similarly, in the NLP domain, transformers get rid of recurrent neural networks (RNNs) and are able to process a sequence as a whole rather than one element at a time. The key ingredients are the self-attention and multi-head attention layers, which roughly tell the network how elements in a long sequence relate to each other, so that for every element (say a word, or a pixel) we know where to pay attention. The best example is text translation: when translating “piace” in “Mi piace questo articolo”, the transformer also needs to pay attention to the word “Mi” to correctly produce “I like this article”, while the other words matter much less. A similar sentence such as “Le piace questo articolo” translates instead into “She likes this article”.
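To make the idea of attention weights a bit more concrete, here is a minimal sketch of single-head scaled dot-product self-attention in plain Python. It is only an illustration: the 2-dimensional embeddings are made-up numbers, and the queries, keys and values are taken to be the embeddings themselves, whereas a real transformer would first pass them through learned linear projections.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Single-head scaled dot-product attention.

    weights[i][j] says how much element i attends to element j;
    outputs[i] is the attention-weighted average of the value vectors.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        w = softmax(scores)
        out = [sum(w[j] * values[j][c] for j in range(len(values)))
               for c in range(len(values[0]))]
        weights.append(w)
        outputs.append(out)
    return outputs, weights

# Made-up embeddings (assumption): "Mi" and "piace" point in similar directions.
tokens = ["Mi", "piace", "questo", "articolo"]
x = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]

# Identity projections (assumption): queries = keys = values = embeddings.
outputs, weights = self_attention(x, x, x)

for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each row of `weights` sums to one, and with these toy numbers the row for “piace” puts more weight on “Mi” than on “questo”. Multi-head attention runs several such heads in parallel, each with its own projections, and concatenates their outputs.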

The transformer architecture is usually divided into encoder and decoder modules. The encoder is responsible for taking the input data and building representations of its features, usually as embedding vectors. The decoder takes these embeddings as input and generates new sequences. Depending on what we need to do, we may only need one of these modules. Encoder-only architectures are used for tasks requiring an understanding of the input, such as sentiment analysis and sentence classification. Decoder-only models are generative models, used for instance for text or image generation. Encoder-Decoder architectures are suited for text translation and summarisation.

Famous networks of each category include:

- Encoder-only: BERT, RoBERTa, ALBERT, ViT

- Decoder-only: GPT-2, GPT-3

- Encoder-Decoder: BART, T5, DETR

The de facto standard for playing with transformers is the Hugging Face library, which comes with excellent documentation that I encourage you to check. The next big thing for transformer architectures in supervised/unsupervised learning is likely to be multimodal transformers: networks able to ingest text, audio, images and more at the same time.

RL Transformers

We usually model reinforcement learning problems as a Markov decision process described by states, actions, transition probabilities and rewards. At every step a typical algorithm looks only at the current state and at past performance, encoded in a state or state-action value function, to decide which action to take next. There is no notion of a “previous action”: a Markov process doesn’t care (or better, it doesn’t remember).

The key hypothesis behind RL transformers is: what if we instead take the whole sequence as the building block on which to base our prediction? If we do so, we have mapped a 1-step Markovian problem into a sequence-to-sequence or sequence-to-action problem, which we can approach using battle-tested supervised learning techniques. No value functions, no actor-critics, no bootstrapping.

Sequences are usually called trajectories in the RL literature, so we will use these terms interchangeably. We will assume that trajectories are given beforehand, so we will limit ourselves to offline reinforcement learning. These trajectories may have been produced by another RL agent or they may be expert (human) demonstrations.

RL with transformers has recently been explored in the papers “Offline Reinforcement Learning as One Big Sequence Modeling Problem” and “Decision Transformer: Reinforcement Learning via Sequence Modeling”.

In the former work the authors first use a GPT-like architecture to ingest trajectories composed of states, actions and rewards

$$ \tau = (s_1, a_1, r_1, s_2, a_2, r_2, \dots ) $$

and to generate novel candidate trajectories. The setup is nearly identical to the one that we would use in natural language processing. Finally they use beam search as a planning strategy, iteratively expanding the most promising trajectories, weighted by cumulative return, until a single trajectory stands out as the most promising. To avoid being too greedy they also augment trajectories with the reward-to-go $R_t$ (future cumulative reward) at each step.

$$ R_t = \sum_{t' = t}^{T} \gamma^{t'-t} r_{t'} $$
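The reward-to-go can be computed for a whole trajectory in a single backward pass, using the recursion $R_t = r_t + \gamma R_{t+1}$ that follows from the definition above. The snippet below is a small sketch of that computation; the function name and the example rewards are mine, chosen only for illustration.

```python
def rewards_to_go(rewards, gamma=1.0):
    """Discounted sum of future rewards, from each step to the end of the episode."""
    rtg = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each step reuses the value already computed for the next one.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        rtg[t] = running
    return rtg

rewards = [1.0, 0.0, 2.0]
print(rewards_to_go(rewards, gamma=0.5))  # [1.5, 1.0, 2.0]
print(rewards_to_go(rewards))             # [3.0, 2.0, 2.0]
```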

In the latter work the authors drop the per-step rewards and only keep the reward-to-go, directly predicting the next action given the state and a target final return.

$$ \tau = (R_1, s_1, a_1, R_2, s_2, a_2, \dots ) $$
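To see how this turns RL into plain supervised learning, here is a minimal sketch of how a logged trajectory could be cut into (context, target action) training examples. Everything in it is illustrative: the helper names, the `context_len` parameter and the toy trajectory are my own assumptions, and each step is stored as a (reward-to-go, state, action) triple so that the action to predict comes last.

```python
def rewards_to_go(rewards, gamma=1.0):
    """Sum of future rewards from each step (undiscounted when gamma is 1)."""
    rtg, running = [0.0] * len(rewards), 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        rtg[t] = running
    return rtg

def make_examples(states, actions, rewards, context_len=2):
    """Turn one trajectory into supervised (context, target_action) pairs."""
    rtg = rewards_to_go(rewards)
    examples = []
    for t in range(len(actions)):
        start = max(0, t - context_len + 1)
        # Past steps are fully visible to the model.
        context = [(rtg[i], states[i], actions[i]) for i in range(start, t)]
        # The current step shows only the reward-to-go and the state;
        # the action is hidden (None) because it is what we want to predict.
        context.append((rtg[t], states[t], None))
        examples.append((context, actions[t]))
    return examples

# Toy trajectory (made-up values).
states = ["s1", "s2", "s3"]
actions = ["left", "right", "left"]
rewards = [0.0, 1.0, 2.0]

examples = make_examples(states, actions, rewards)
for context, target in examples:
    print(context, "->", target)
```

A sequence model trained on such pairs never sees a value function: it just learns which action followed a given history and reward-to-go in the dataset.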

This very simple approach is reminiscent of upside-down reinforcement learning: instead of asking the agent to search for an optimal policy, we just ask it to “obtain so much total reward in so much time”. In essence we are doing supervised learning, asking the agent to extrapolate from past trajectories which trajectory will lead to the highest rewards. In fact, it is possible to condition the agent to achieve arbitrary rewards (among the ones achieved in the training dataset). This can be handy if intermediate levels of reward are still meaningful. For instance, last year I used this technique (before knowing that upside-down RL had a name) to teach a food-scooping robot to pick different portion sizes, having set the exact weight of scooped food as a positive reward. Basically the robot trains to scoop as much as possible, but if we ask for a small portion it should be able to do that!
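Here is a minimal sketch of what conditioning on a target return can look like at inference time: we ask for a return, and after every step we subtract the reward actually obtained from what is still requested. The environment and the policy are hypothetical stand-ins of mine (a toy scooper that adds 10 grams per scoop, and a hand-written rule in place of a trained transformer), so this shows only the control loop, not a real agent.

```python
def rollout(policy, env_step, initial_state, target_return, max_steps=10):
    """Run one episode while tracking how much return is still requested."""
    state = initial_state
    remaining = target_return
    history = []
    for _ in range(max_steps):
        # The policy sees the past steps, the current state and the return still to obtain.
        action = policy(history, state, remaining)
        next_state, reward, done = env_step(state, action)
        history.append((remaining, state, action))
        remaining -= reward  # what is left to obtain after this step
        state = next_state
        if done:
            break
    achieved = target_return - remaining
    return history, achieved

def toy_env_step(grams, action):
    """Hypothetical scooping environment: each scoop adds 10 grams (reward 10)."""
    if action == "stop":
        return grams, 0.0, True
    return grams + 10.0, 10.0, False

def stub_policy(history, grams, remaining_return):
    """Hand-written stand-in for a trained model: scoop while return is still requested."""
    return "scoop" if remaining_return > 0 else "stop"

history, achieved = rollout(stub_policy, toy_env_step, 0.0, target_return=30.0)
print([action for _, _, action in history], achieved)
```

Asking for a different `target_return` changes the portion size without retraining anything, which is exactly the property I relied on with the scooping robot.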

From what I wrote so far you may be thinking that this is pretty much behaviour cloning on transformer steroids… and you would not be that wrong! Indeed the authors of Decision Transformer also recognised this and benchmarked against vanilla behaviour cloning (by the way, props to how they divided their discussion section into clearly articulated questions). The conclusion is that, even though performance is often comparable, the transformer approach does outperform vanilla behaviour cloning if there is a large amount of training data.

How do RL transformers perform overall? Pretty well: they are often on par with or better than state-of-the-art model-free offline TD-learning methods such as Conservative Q-Learning (CQL) on benchmarks like Atari and D4RL!

On Atari they have been tested on a DQN-replay Atari dataset, performing on par or better on Breakout, Pong and Seaquest, while doing worse on Qbert. On D4RL (an offline RL benchmark using OpenAI Gym) they have been tried on continuous control tasks such as HalfCheetah, Hopper and Walker, being again on par with state-of-the-art methods. Tests on a Key-to-Door environment, in which a key must be picked up well in advance of reaching the final door, also show very good long-term credit assignment capabilities. Tests on modified D4RL benchmarks in which the reward is given only at the end of the episode show that transformers also perform well in sparse reward settings, while the performance of CQL plummets.

The Good and the Hype

So, why is this whole thing interesting? The reasons are very pragmatic: there is the opportunity of transferring progress from very well funded fields (NLP, vision for consumer applications) to many less wealthy (and often harder) fields such as robotics, out of the box. Battle-tested architectures trained on large quantities of data can be reused, saving engineering time on RL algorithms (no need to bootstrap value functions, no actor-critic networks, etc.). These techniques may become the standard way to pretrain agents. On a more technical level, RL transformers appear to be good at long-term credit assignment and in sparse reward scenarios, which is very often what we face in real-world RL.

That said, these methods are not a panacea. For instance, they have not yet been seriously benchmarked on online RL, the “normal” RL. Knowing that current deep learning techniques tend to cope very poorly with data that is not independent and identically distributed, current transformer architectures may not be enough. Also, for specific domains it ultimately boils down to the availability of large datasets of expert trajectories, which in fields like robotics are still missing.

All in all, the idea is pretty fresh and there may still be a lot of room for improvement. Onwards!
